Linear Algebra

Generally speaking, linear algebra is the analysis of vectors, matrices, and linear transformations. Linear algebra concepts are essential for machine learning.

A typical user of an AI application is probably not thinking about vectors or matrices, and breaking down the processes behind artificial intelligence can admittedly be a bit intimidating. But ultimately, it's important to understand that our applications of AI aren't magic; they're math.

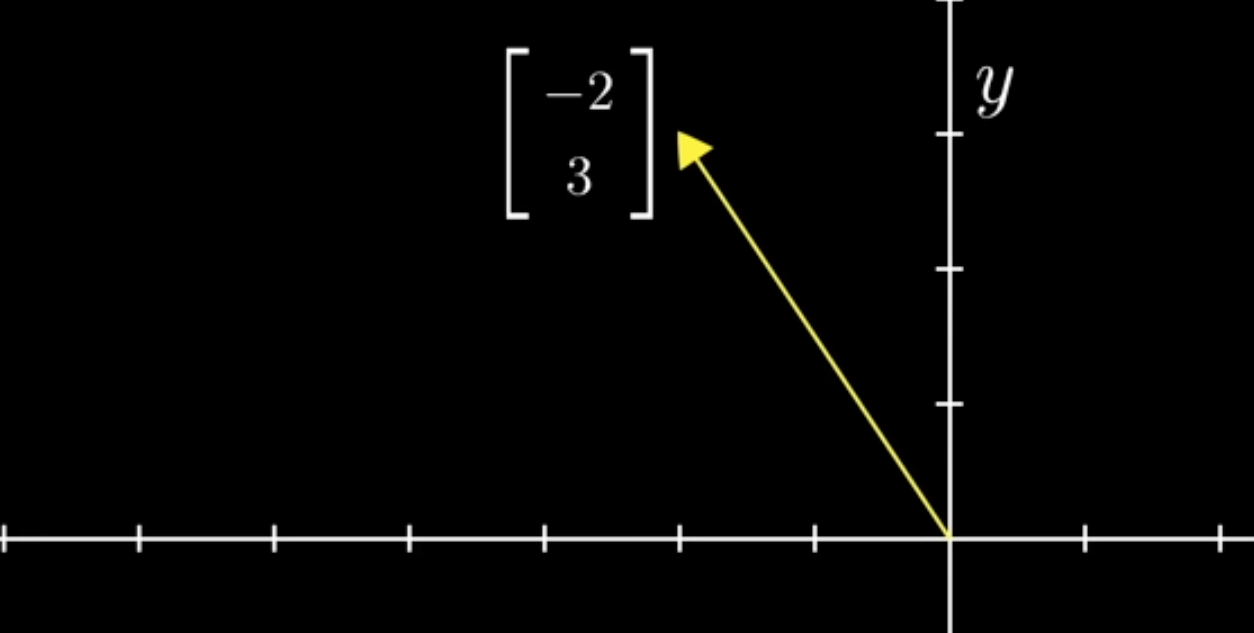

Vector

A vector is a line with magnitude and direction. For large language models, words (or, more specifically, tokens) are transformed into vectors called embeddings.

An embedding is a much more complicated vector than the one depicted below. But remember, a 2-dimensional vector is a line with an x and a y. It is often easier to think of a vector as a list of numbers. Similarly, a matrix can be thought of as a grid of numbers.
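To make the "list of numbers" and "grid of numbers" idea concrete, here is a minimal sketch using NumPy. The values in `v` and `M` are made up for illustration; a real embedding would have hundreds or thousands of numbers rather than two.

```python
import numpy as np

# A 2-dimensional vector: a list of two numbers (x, y).
v = np.array([3.0, 4.0])

# Magnitude (length) of the vector: sqrt(3^2 + 4^2).
magnitude = np.linalg.norm(v)

# Direction, expressed as the angle from the x-axis in degrees.
angle = np.degrees(np.arctan2(v[1], v[0]))

# A matrix: a grid of numbers, here with 2 rows and 3 columns.
M = np.array([[1, 2, 3],
              [4, 5, 6]])

print(v.shape)    # (2,)
print(magnitude)  # 5.0
print(M.shape)    # (2, 3)
```

The `shape` attribute reports how many numbers sit along each dimension, which becomes important once vectors and matrices are multiplied together.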

In Action: A linear regression model

Let's think about linear algebra in terms of an equation for a line: y = mx + b, where y is the output, m is the slope, x is the input, and b is the y-intercept. Yes, this equation that's been forced on you since your youth has applications in machine learning.
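As a quick illustration, the line equation can be written as a tiny Python function. The function name `line` and the example slope and intercept are hypothetical, chosen only to show the arithmetic.

```python
def line(x, m, b):
    """Return y = m * x + b for input x, slope m, and y-intercept b."""
    return m * x + b

# Hypothetical values: slope of 2 and y-intercept of 1.
print(line(3, m=2, b=1))  # 7
```

A linear regression model does the same thing, only with many inputs and many slopes at once.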

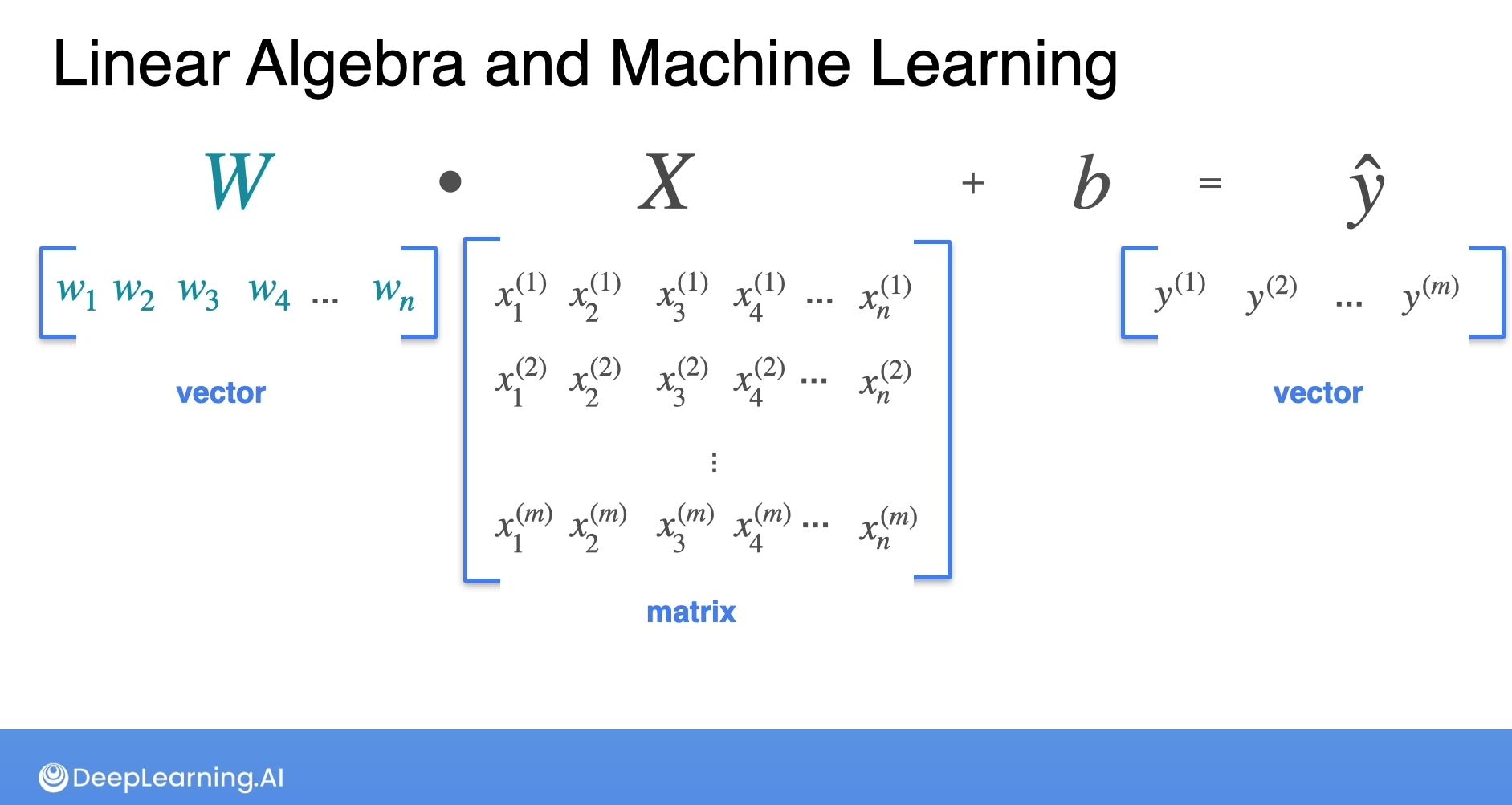

The image below is a screenshot from a DeepLearning.AI video that walks through how to predict electrical power output. It depicts how a vector-w multiplied by a matrix-x plus a bias-b equals an estimated output y.

The rows of the matrix-x could represent different days of data collection, and the columns represent the different features, such as wind speed, temperature, and humidity. The vector-w holds the weights. To be clear, the process of training a model is figuring out what those weights should be in order for the equation to make accurate predictions.

Each number in a row is multiplied by its corresponding weight, the products are added together, and the bias term 'b' is added to that sum. The result is the predicted power output for that row.
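The row-by-row calculation described above can be sketched in NumPy. All numbers here are invented for illustration (they are not from the DeepLearning.AI video), and the names `X`, `w`, `b`, and `y_hat` are assumptions that mirror matrix-x, vector-w, the bias, and the estimated output.

```python
import numpy as np

# Made-up data: 3 days (rows) by 3 features (columns):
# wind speed, temperature, humidity.
X = np.array([[5.0, 20.0, 0.5],
              [7.0, 18.0, 0.6],
              [3.0, 25.0, 0.4]])

# Made-up weights (one per feature) and a made-up bias.
w = np.array([2.0, 0.5, -4.0])
b = 1.0

# One prediction per day: multiply each row by the weights,
# add up the products, then add the bias.
y_hat = X @ w + b

# The same calculation for the first day, written out by hand.
first = X[0, 0] * w[0] + X[0, 1] * w[1] + X[0, 2] * w[2] + b

print(y_hat)  # approximately [19.0, 21.6, 17.9]
print(first)  # 19.0
```

The `@` operator performs the multiply-and-sum step for every row at once, which is why the whole model fits in a single line.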

If the image above is intimidating, do not worry. But it's important to recognize that shapes matter in linear algebra. The width of vector-w is the same as the length of matrix-x, so every feature has exactly one weight to be multiplied by. If they were different, this equation wouldn't work.
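To see why shapes matter, here is a small sketch with placeholder data. It assumes the layout described earlier (rows are days, columns are features); the names `w_ok` and `w_bad` are hypothetical.

```python
import numpy as np

X = np.ones((4, 3))   # 4 days, 3 features
w_ok = np.ones(3)     # 3 weights: one per feature
w_bad = np.ones(2)    # 2 weights: one feature has no weight

print((X @ w_ok).shape)  # (4,)

mismatch_raised = False
try:
    X @ w_bad
except ValueError as err:
    # NumPy refuses to multiply when the shapes do not line up.
    mismatch_raised = True
    print("Shape mismatch:", err)
```

With matching shapes the result is one number per day. With mismatched shapes NumPy raises an error instead of guessing which weight belongs to which feature.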